I received my Bachelor's degree from the University of Science and Technology of China (USTC) in June 2024 and am now a Master's student in the Department of Physics at USTC. My research interests lie at the intersection of artificial intelligence and dynamical systems, with a focus on three interconnected directions:

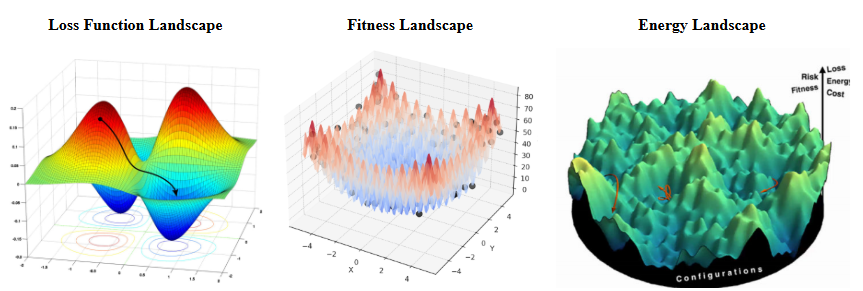

- Dynamical Systems of AI: understanding the training dynamics of deep learning from the perspective of dynamical systems. This includes studying the dynamics of optimizers such as SGD and Adam, as well as understanding training dynamics through the lenses of statistical physics and neural evolution. (Theory)

- Dynamical Systems for AI: designing novel AI algorithms inspired by real-world dynamical systems. This encompasses metaheuristic algorithms such as evolutionary computation and swarm intelligence, deep generative models such as diffusion models and flow matching, and the development of new deep learning optimizers. (Algorithm)

- AI for Dynamical Systems: leveraging AI to solve complex problems in dynamical systems. This includes using AI to accelerate the simulation of dynamical systems and to carry out prediction and optimization tasks based on them. (Application)

Education Background

University of Science and Technology of China

Bachelor of Mathematics and Applied Mathematics

University of Science and Technology of China

Master of Physics

IELTS: 7.0/9.0

Certificate: Plan for Strengthening Basic Academic Disciplines, Strengthening Foundation Plan in Mathematics

Conference Experience

The 40th AAAI Conference on Artificial Intelligence (AAAI) 2026, Poster Presentation

IEEE Congress on Evolutionary Computation (CEC) 2025, Oral Presentation

5th Amorphous Physics and Materials Symposium 2024, Attendee

3rd International Conference on Applied Mathematics, Modelling, and Intelligent Computing (CAMMIC 2023), Attendee

2023 4th International Conference on Computer Vision, Image and Deep Learning (CVIDL 2023), Attendee

2023 8th International Conference on Intelligent Computing and Signal Processing, Attendee

School Experience

2022: Mathematical Analysis B1, Teaching Assistant

2024: Mathematical Modeling, Teaching Assistant

Research Experience

Surrogate-Driven Multi-Objective Evolutionary Design of Battery Phase Change Materials, Cooperation Project at USTC

Advisor: Prof. Qiangling Duan

Discovery of Metallic Glasses Driven by Large Language Models and Graph Neural Networks, University Innovation Project at Songshan Lake Materials Laboratory

Advisor: Prof. Yuanchao Hu

Deep Neural Network-Based Control of Quantum Uncertain Systems, University Innovation Project at USTC

Advisor: Prof. Sen Kuang

Statistical Physics & Artificial Intelligence, Master's Student at USTC

Advisor: Prof. Hua Tong

Evolutionary Algorithms & Machine Learning, Research Assistant at Wenzhou University

Advisor: Prof. Huiling Chen

Non-equilibrium Statistical Physics & Complex Networks, Research Assistant at USTC

Advisor: Prof. Binghong Wang

Software Copyright

KC-optimizer: An Integrated Interactive Platform for Metaheuristic Algorithms for Function Optimization V1.0 (Software Copyright, Registration No. 2024SR0164438)

Reviewer Experience

2024: Swarm and Evolutionary Computation (JCR Q1, IF: 8.2), Reviewer

2025: Knowledge-Based Systems (JCR Q1, IF: 7.2), Reviewer

2025: International Joint Conference on Neural Networks (IJCNN), Reviewer

2025: International Conference on Intelligent Computing (ICIC), Reviewer

2025: AAAI 2026, Reviewer

2025: Computers and Electrical Engineering (JCR Q1, IF: 4.9), Reviewer

2025: International Journal of Computational Intelligence Systems (JCR Q2, IF: 3.116), Reviewer

2025: Information Sciences (JCR Q1, IF: 6.8), Reviewer

2025: Neurocomputing (JCR Q1, IF: 6.5), Reviewer

2025: Scientific Reports (JCR Q1, IF: 3.9), Reviewer

2025: Engineering Reports (JCR Q2, IF: 2.0), Reviewer

2025: Biomedical Signal Processing and Control (JCR Q2, IF: 4.9), Reviewer

2025: Engineering Computations (JCR Q2, IF: 1.9), Reviewer

2025: Cluster Computing (JCR Q1, IF: 4.1), Reviewer

2025: Control and Decision (Chinese Core Journal, IF: 3.012), Reviewer

2025: ISPRS Journal of Photogrammetry and Remote Sensing (JCR Q1, IF: 12.2), Reviewer

2025: Results in Engineering (JCR Q1, IF: 7.9), Reviewer

2025: Smart Agricultural Technology (JCR Q1, IF: 5.7), Reviewer

2025: Optik, Reviewer

2025: Advanced Engineering Informatics (JCR Q1, IF: 9.9), Reviewer

2026: IEEE WCCI 2026, Reviewer

2026: Mathematics (JCR Q1, IF: 2.2), Reviewer

2026: Discover Informatics, Reviewer

Skills

Language Skills: Chinese (Native), English (Fluent)

Computer Skills: Microsoft Office 365, Python, MATLAB, MySQL, Java, C/C++, LAMMPS

Contact

Email: oykc@mail.ustc.edu.cn

Tel: +86 15888787619